Augmentiary: Exploring LLM-Based Interpretive Support for Meaning-Making in Reflective Journaling

1 Overview

- Augmentiary is a project that explores how LLMs can support journaling not just as a way of recording events, but as a process of interpreting experience and constructing personal meaning. To this end, I designed and implemented a reflective journaling system that offers concrete interpretive suggestions while letting users retain ownership of their voice and of how their experiences are interpreted.

- I first conducted a three-day formative study to derive key design considerations, and then designed the Augmentiary system based on those findings. I subsequently carried out a four-week field deployment study to examine how AI-supported interpretation functioned in actual journaling and self-reflection practice.

- Role: end-to-end research design and execution, system and interaction design, generative pipeline design, web-based system implementation through vibe coding, four-week live deployment and operation, interview-based qualitative analysis.

2 Context

People do not simply store the events they go through in life. They make sense of themselves by interpreting why those events mattered, what they meant personally, and how they connect to the life they have lived and the life they are moving toward. This project focused on this process of meaning-making as a core value of journaling. Because journaling turns experience into language and invites people to revisit it, it can become an important practice for weaving the past, present, and future into a coherent personal narrative.

However, journaling does not automatically lead to insight. Simply writing down what happened often does not go far enough to reveal how an experience relates to one’s values, identity, or prior experiences. In many cases, journaling remains a monologic record rather than developing into a reflective process that considers multiple possible interpretations of what an experience could mean. Reflection can also slip into rumination, where negative thoughts are repeatedly rehearsed rather than meaningfully worked through.

Against this backdrop, HCI research has treated journaling not only as a tool for storing records but as an interactive environment that can support revisiting and reconstructing experience. Prior work has explored ways of encouraging reflection through visualizing emotional patterns, offering prompts, or rearranging past records. Yet the burden of making connections and constructing interpretations still largely remains with the user. Helping people record more effectively is not the same as helping those records lead to deeper meaning-making.

At the same time, emerging research has begun to explore how AI can support reflection by transforming personal data into other forms such as images, poetry, or reconstructed narratives, so that users can discover new meanings through them. These approaches are valuable because they keep interpretation open-ended, but they leave unresolved what role concrete textual interpretive suggestions might play in everyday, reality-based journaling. LLMs are especially promising because they can suggest alternative perspectives and potential insights within the same linguistic medium as journaling itself. However, they also carry important risks: users may offload interpretive effort onto AI, or accept AI-generated language as overly authoritative.

In this study, I explore how LLM-generated interpretive feedback can support meaning-making and deeper self-reflection in journaling while preserving users' agency and voice.

This study is guided by the following research questions:

- RQ1: What design considerations should guide the delivery of LLM-generated interpretive feedback to facilitate meaning-making in journaling?

- RQ2: How do people experience an LLM-based reflective journaling system that offers interpretive feedback designed according to these considerations?

3 Approach

3-1 Research Structure

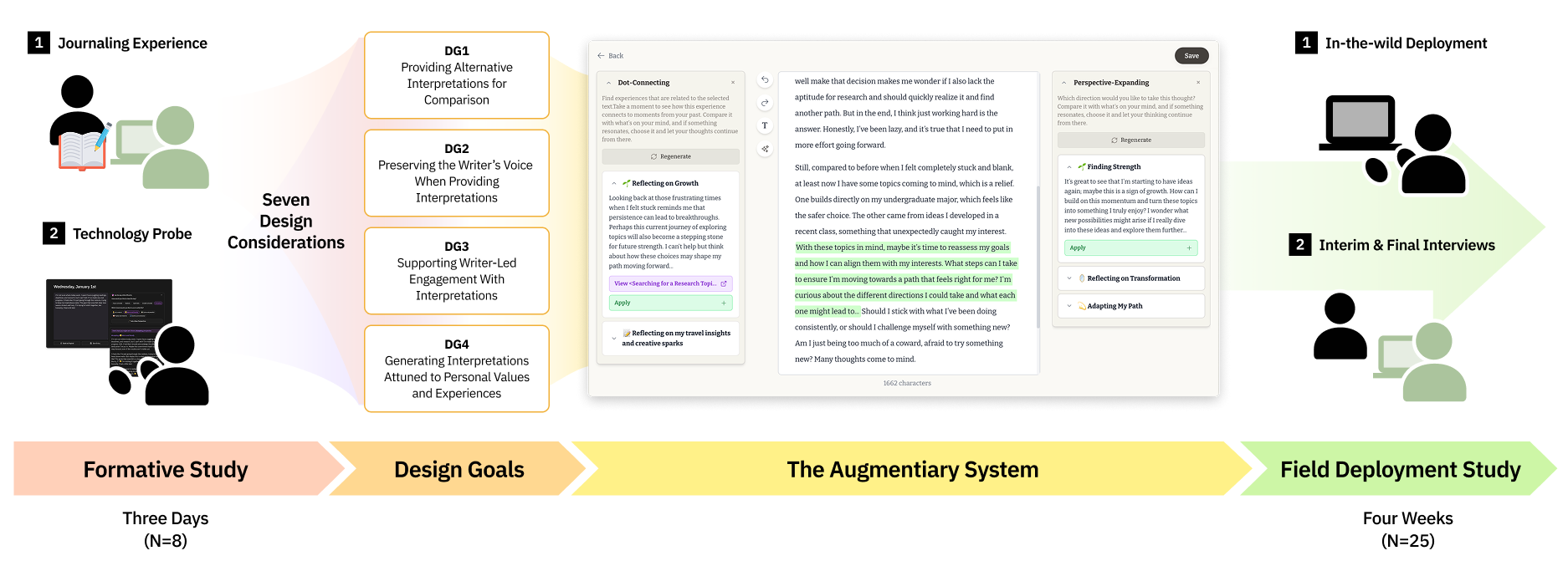

The research was conducted in two stages. First, I carried out a three-day formative study with eight participants who already had regular journaling habits, in order to explore what kinds of value and limitations emerge when AI inserts interpretations directly into journal text. I then distilled the findings into four design goals, implemented Augmentiary accordingly, and conducted a four-week field deployment study with 25 participants to qualitatively analyze the lived experience of using the system for reflection.

Overall research flow, from deriving design considerations in the formative study to system design and the four-week deployment study.


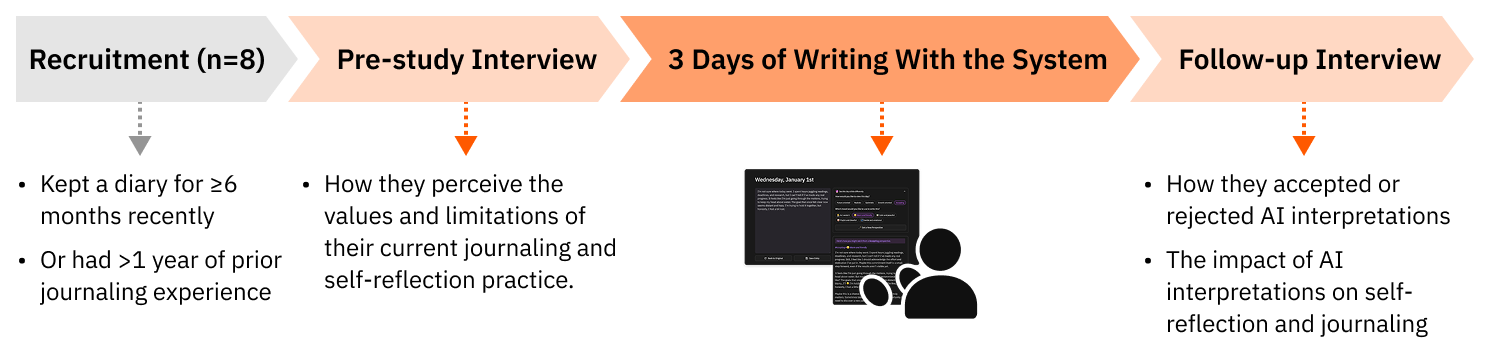

3-2 Deriving Design Considerations Through a Technology Probe

Formative Study

Overview of the formative study conducted to derive design considerations.


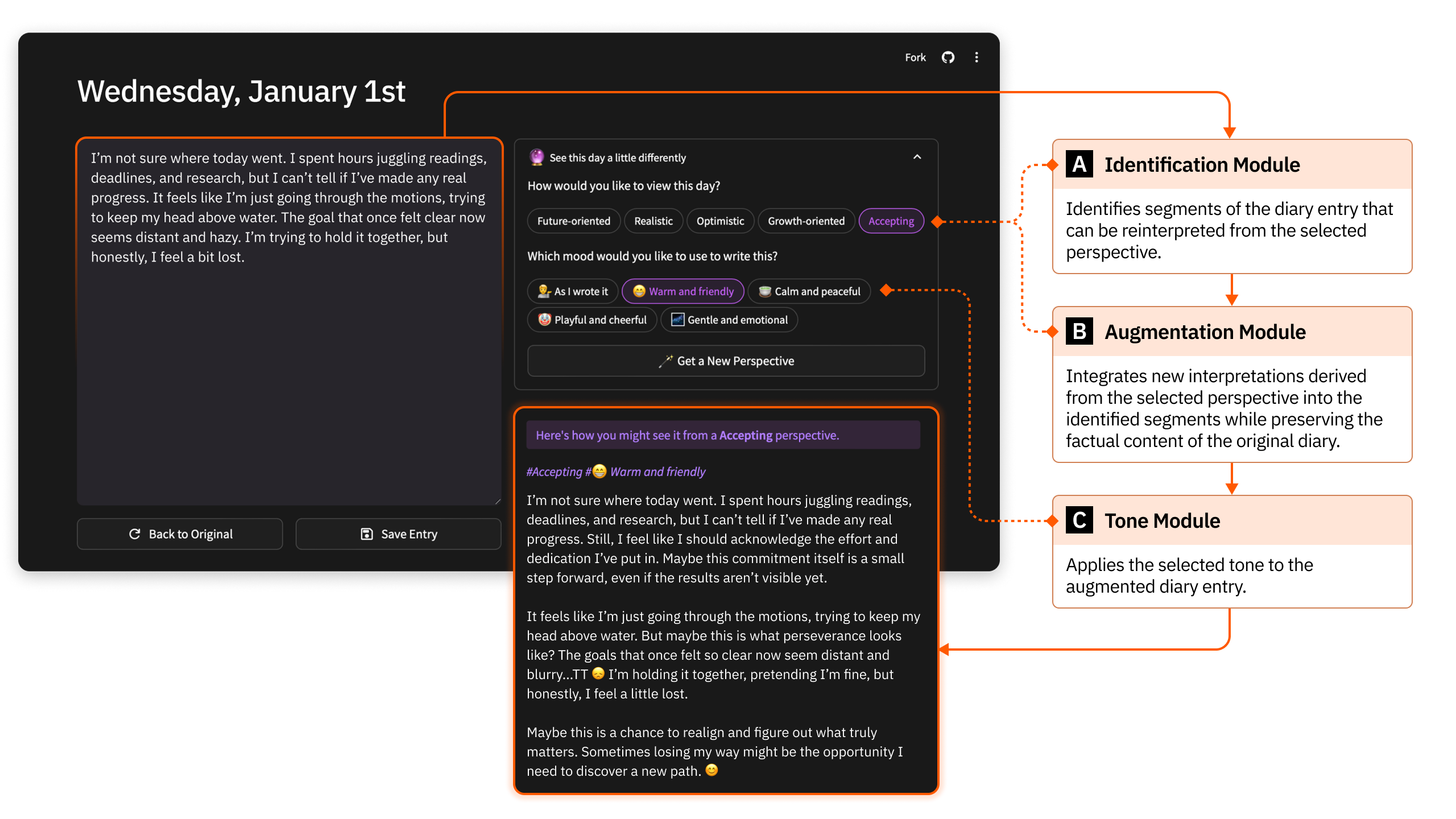

To conduct the formative study, I developed and deployed a technology probe. Technology probes are useful for understanding what users want in real contexts of use, evaluating technology in the wild, and generating inspiration for future design directions.

In the probe, when users wrote a journal entry, AI inserted interpretive sentences into the text according to a selected perspective and tone. The probe was not intended to validate a finished product. Rather, it was used to reveal what users found meaningful, and where they felt discomfort, when AI-generated interpretations entered the flow of journaling.

Interaction flow of the technology probe used in the formative study.


I recruited eight participants who had either written in a diary at least once a week over the previous six months or had maintained a sustained journaling habit for more than a year. After a pre-study interview, participants used the probe for three days and then took part in a follow-up interview. Based on a total of 770 minutes of interview data, I conducted open coding and thematic analysis.

Findings from the Formative Study: Seven Design Considerations

The formative study surfaced seven considerations for designing AI-supported interpretation in journaling. These were later consolidated into Augmentiary’s design goals.

- Keep users engaged in writing itself: Participants felt that writing helped them gain emotional distance and organize scattered thoughts. Accordingly, interpretive support should encourage continued writing rather than passive reading.

- Present alternative interpretations that can be compared: Participants reflected more deeply when multiple interpretive options were placed alongside their own writing, instead of being given a single “correct” interpretation.

- Avoid distorting the user’s voice: When AI altered facts or intentions, participants immediately rejected it. Interpretations therefore needed to be added to the original writing rather than replacing it.

- Support selective uptake and revision: Participants wanted to take only the useful parts of AI suggestions and rewrite them in their own language. This selective process was central to maintaining authorship.

- Make AI’s contribution clearly distinguishable: When the boundary between AI-generated text and user-written text became blurred, the sense of authorship weakened. The interface therefore needed visual devices that clearly marked AI contributions.

- Generate interpretations that resonate with personal values and context: Interpretations felt persuasive not when they sounded generally encouraging, but when they reflected the user’s values, disposition, and lived context.

- Connect past records with present experience: Participants were able to construct richer meaning when current emotions and events were connected with past entries and folded into a longer personal narrative.

3-3 Design and Implementation of Augmentiary

Design Goals

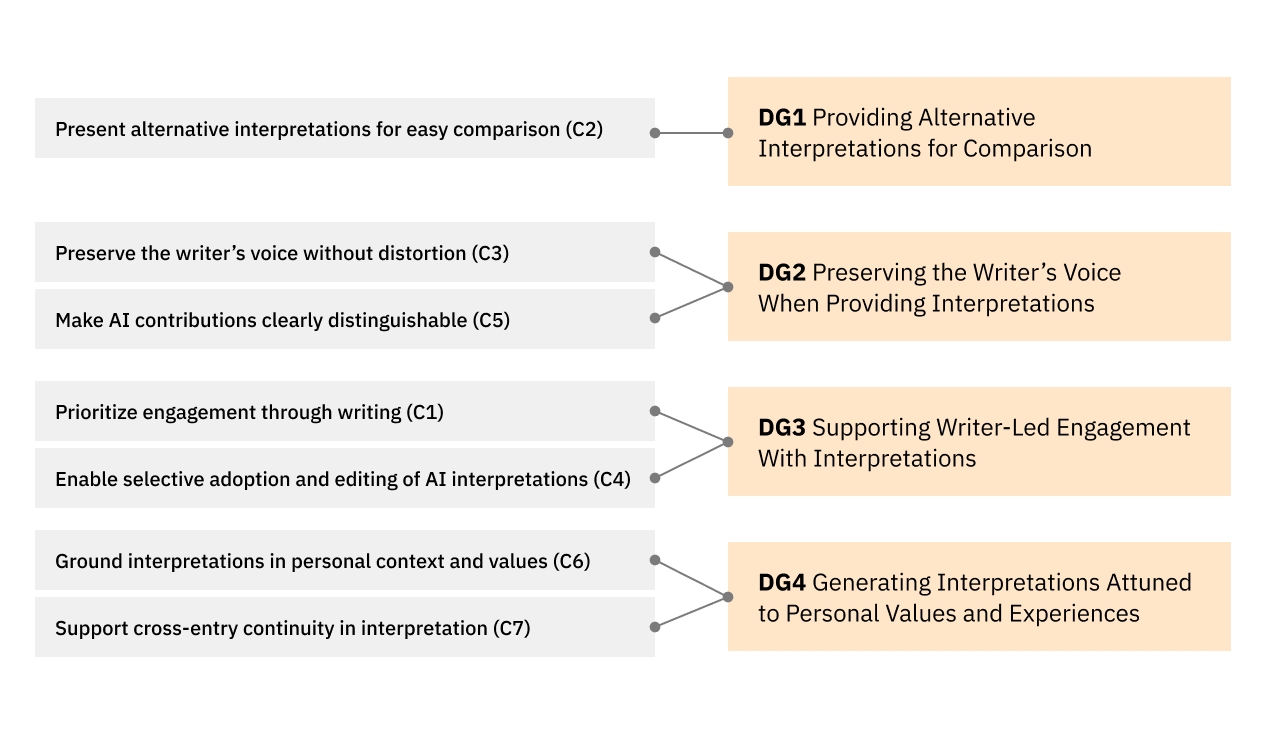

Design goals derived from the seven design considerations.


Based on the seven design considerations identified above, I defined four final design goals.

- DG1: Providing alternative interpretations for comparison rather than a single answer. Instead of presenting one fixed conclusion, the system was designed to let users compare multiple interpretive possibilities side by side and form their own stance through comparison.

- DG2: Preserving the writer’s voice and preventing distortion. The system was designed so that AI does not replace the original text, but instead adds interpretations that users can adjust while keeping their own writing at the center.

- DG3: Supporting writer-led engagement through user-controlled intervention. Users were given control over when to invoke AI, which suggestions to accept, and how to revise them.

- DG4: Generating interpretations attuned to personal values and experiences. The system was designed to consider not only the currently selected passage, but also the user’s values, traits, and past experiences to generate more personally attuned interpretations.

Interaction Design

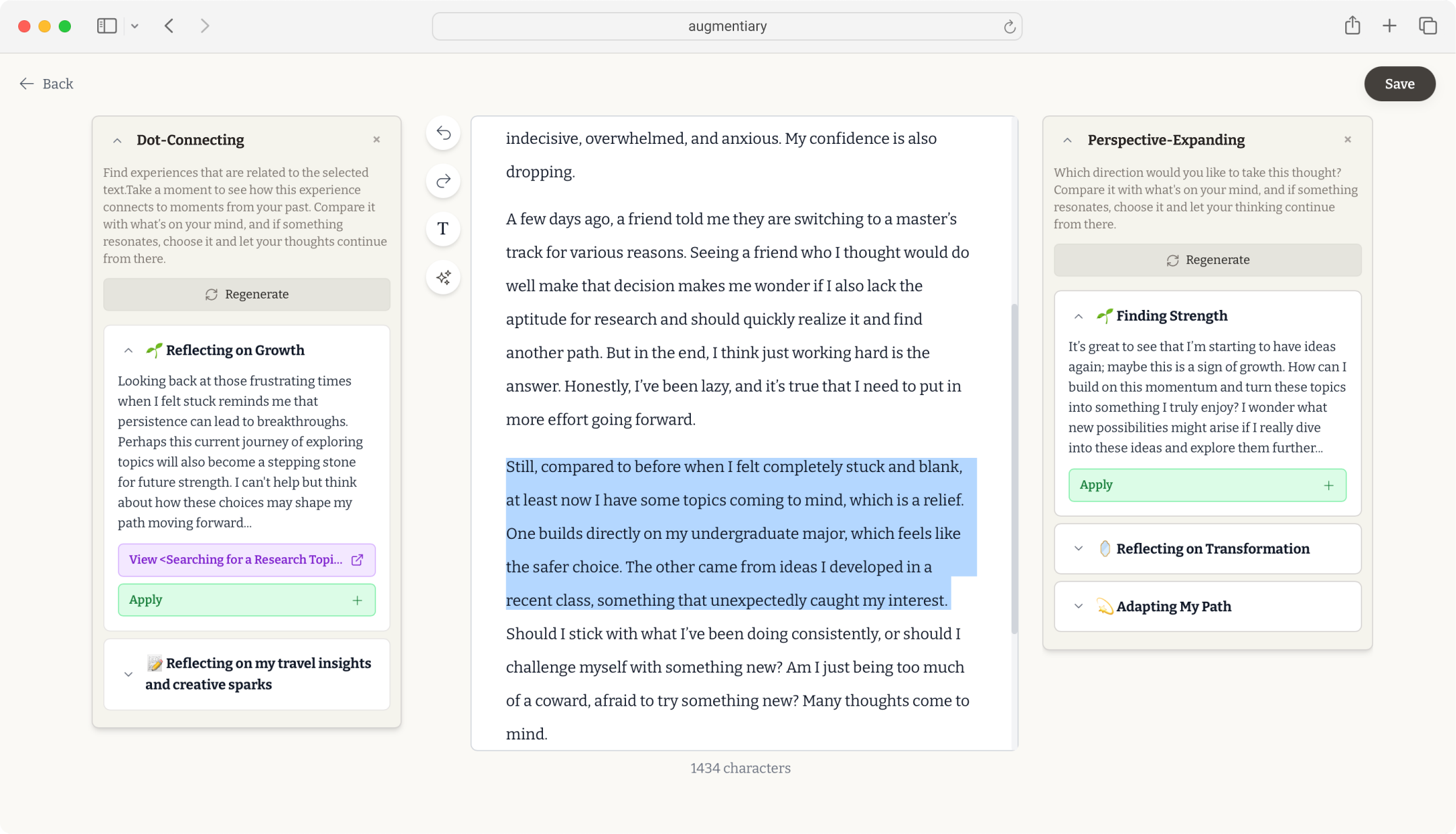

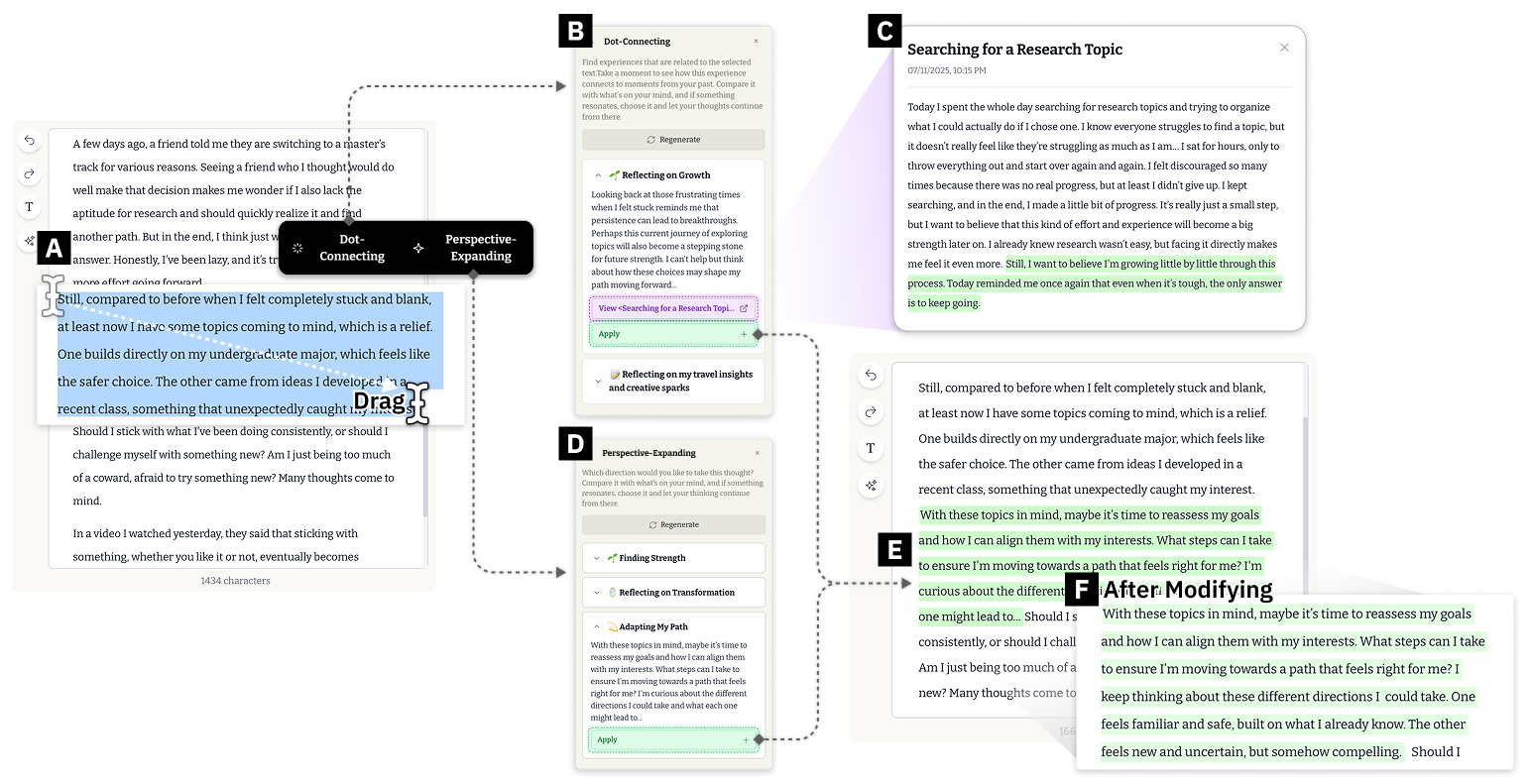

Core interaction flow of Augmentiary.


The interaction design of Augmentiary was structured to let users continue the flow of journaling, add interpretation only when needed, and then reintegrate it into their own writing.

- Writer-led intervention: Users first describe their experiences and emotions freely in the central editor. If there are passages they want to expand on or think through further, they can directly select those passages to invoke the AI interpretation feature. The feature remains hidden until users deliberately select text, and it becomes available only after a sufficient amount of writing has been produced. This design preserves the baseline flow in which users first articulate their own thoughts.

- Personalized interpretive generation: The system provides two reflective modes.

  - Perspective-Expanding presents candidate interpretations that reflect different meaning-making approaches for the currently selected passage.

  - Dot-Connecting helps users connect their present experience with relevant past journal entries and broader autobiographical context.

- Multiple interpretations instead of one answer: Rather than offering a single authoritative response, the AI generates up to three candidate interpretations with distinct possible meanings. Users can compare them side by side and decide which one resonates more. In this process, they do not simply receive AI-generated text. They move back and forth between their own thoughts and the AI’s suggestions, reconsidering the meaning of the present experience.

- Non-destructive insertion: AI-generated interpretations do not overwrite the original journal entry. They are inserted directly after the selected passage and are visually distinguished with a highlight similar to a marker pen.
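The gating and insertion rules above can be sketched in TypeScript, the language of the front end. This is a minimal, hypothetical sketch over plain text rather than the actual TipTap implementation: the 200-character threshold (`MIN_CHARS_BEFORE_AI`) and the names `canInvokeAI`, `insertAfterSelection`, `TextSelection`, and `Segment` are assumptions, since the source does not specify how much writing counts as sufficient or how the document is modeled internally.

```typescript
// Hypothetical threshold: the source only says "a sufficient amount of writing".
const MIN_CHARS_BEFORE_AI = 200;

// A selection over the plain-text journal entry, covering [from, to).
interface TextSelection {
  from: number;
  to: number;
}

// The entry is modeled as ordered segments, each tagged with its author.
interface Segment {
  author: "user" | "ai";
  text: string;
}

// Writer-led gating: offer the AI only for a non-empty, in-bounds selection
// in an entry that already contains enough of the user's own writing.
function canInvokeAI(entry: string, sel: TextSelection | null): boolean {
  if (sel === null || sel.to <= sel.from) return false;
  if (sel.from < 0 || sel.to > entry.length) return false;
  return entry.trim().length >= MIN_CHARS_BEFORE_AI;
}

// Non-destructive insertion: split the entry at the end of the selection and
// place the AI segment there; the user's text is kept verbatim on both sides.
function insertAfterSelection(entry: string, sel: TextSelection, aiText: string): Segment[] {
  const segments: Segment[] = [];
  const before = entry.slice(0, sel.to);
  const after = entry.slice(sel.to);
  if (before.length > 0) segments.push({ author: "user", text: before });
  segments.push({ author: "ai", text: aiText });
  if (after.length > 0) segments.push({ author: "user", text: after });
  return segments;
}
```

Keeping AI text in its own tagged segment is what makes it possible to render the marker-pen highlight and to check that the user's original words are never modified.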

I also paid close attention to what happens after AI suggestions are inserted into the writing. Rather than allowing AI to complete the writing on the user’s behalf, the system was designed so that the user remains the one who thinks, revises, and writes the interpretation forward.

- Intentional incompleteness: Every AI-generated interpretation inserted into the journal ends with an ellipsis (“…”), leaving it deliberately unfinished. Users are therefore invited to continue the sentence themselves or revise it into their own language. This makes the AI-generated text function not as a completed conclusion, but as a scaffold the user can complete through their own thinking and words.

- Visible traces of editing: The highlighted background of inserted AI interpretations gradually fades as the user edits them more extensively. This encourages users to transform the text into their own language and visually reveals the process by which AI suggestions are gradually absorbed into the user’s writing.
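One way to implement intentional incompleteness and the fading highlight is sketched below. It assumes, without confirmation from the source, that edit extent is measured as the character-level Levenshtein distance between the inserted suggestion and its current text, normalized by length, and mapped linearly to highlight opacity; `toOpenEnded`, `editDistance`, and `highlightOpacity` are hypothetical names.

```typescript
// Intentional incompleteness: strip any sentence-final punctuation the model
// produced and end the suggestion with an ellipsis instead.
function toOpenEnded(suggestion: string): string {
  const trimmed = suggestion.trim().replace(/[.!?…]+$/u, "").trimEnd();
  return `${trimmed}…`;
}

// Levenshtein edit distance using the classic two-row dynamic program.
function editDistance(a: string, b: string): number {
  let prev: number[] = Array.from({ length: b.length + 1 }, (_, j) => j);
  for (let i = 1; i <= a.length; i++) {
    const curr: number[] = [i];
    for (let j = 1; j <= b.length; j++) {
      const cost = a[i - 1] === b[j - 1] ? 0 : 1;
      // deletion, insertion, substitution
      curr[j] = Math.min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost);
    }
    prev = curr;
  }
  return prev[b.length];
}

// Hypothetical mapping: opacity falls linearly from 1 (untouched suggestion)
// to 0 (fully rewritten) as the normalized edit distance grows.
function highlightOpacity(original: string, current: string): number {
  const denom = Math.max(original.length, current.length);
  if (denom === 0) return 0;
  const changed = editDistance(original, current) / denom;
  return Math.max(0, 1 - changed);
}
```

A word-level measure or a nonlinear curve would be equally plausible; the linear, character-level version is used here only because it is easy to reason about.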

Key Features and Generative Pipeline

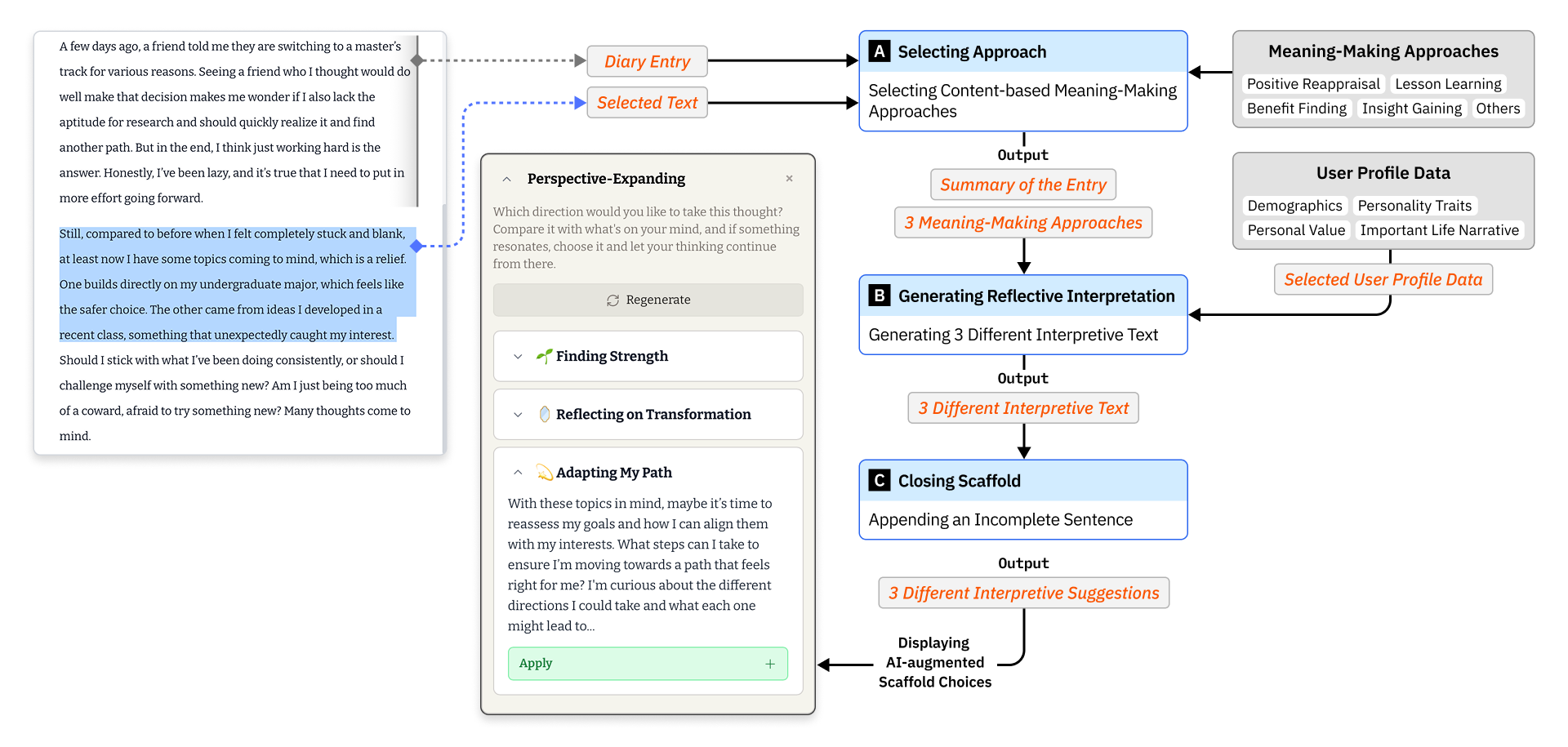

Perspective-Expanding selects three meaning-making approaches from a curated set of nine, based on what best fits the current context, and generates distinct first-person candidate interpretations from them. This feature helps users reconsider a present experience from multiple directions rather than remaining within a single perspective.

Overview of the Perspective-Expanding generative pipeline.

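The Perspective-Expanding step could be approached as a prompt that asks the model to choose three approaches from the curated set, followed by defensive parsing of the reply. The sketch below is hypothetical: the prompt wording, the JSON response shape, and the names `buildPerspectivePrompt` and `parseCandidates` are assumptions, and the nine approaches are passed in as a parameter because the source does not list them.

```typescript
// A candidate interpretation tied to the approach that produced it.
interface Candidate {
  approach: string;
  text: string;
}

interface PromptMessage {
  role: "system" | "user";
  content: string;
}

// Hypothetical prompt: the model chooses three approaches from the curated set
// and writes one short, open-ended first-person interpretation for each.
function buildPerspectivePrompt(passage: string, profile: string, approaches: string[]): PromptMessage[] {
  const system = [
    "You help a journal writer reflect on a passage they selected.",
    `From these meaning-making approaches, choose the three that best fit the passage: ${approaches.join(", ")}.`,
    "For each, write one short first-person interpretation that stays open-ended.",
    'Reply only with JSON of the form {"candidates": [{"approach": "...", "text": "..."}]}.',
  ].join("\n");
  const user = `About the writer:\n${profile}\n\nSelected passage:\n${passage}`;
  return [
    { role: "system", content: system },
    { role: "user", content: user },
  ];
}

// Keeps at most three candidates with distinct approaches from the curated set,
// dropping malformed, duplicated, or invented entries from the model's reply.
function parseCandidates(raw: string, approaches: string[]): Candidate[] {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return [];
  }
  if (typeof data !== "object" || data === null) return [];
  const list = (data as { candidates?: unknown }).candidates;
  if (!Array.isArray(list)) return [];
  const allowed = new Set(approaches);
  const seen = new Set<string>();
  const out: Candidate[] = [];
  for (const item of list) {
    const approach = (item as { approach?: unknown } | null)?.approach;
    const text = (item as { text?: unknown } | null)?.text;
    if (typeof approach !== "string" || typeof text !== "string") continue;
    if (!allowed.has(approach) || seen.has(approach)) continue;
    seen.add(approach);
    out.push({ approach, text: text.trim() });
    if (out.length === 3) break;
  }
  return out;
}
```

Validating the reply against the curated set keeps the candidates distinct and guards against duplicated or invented approach labels.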

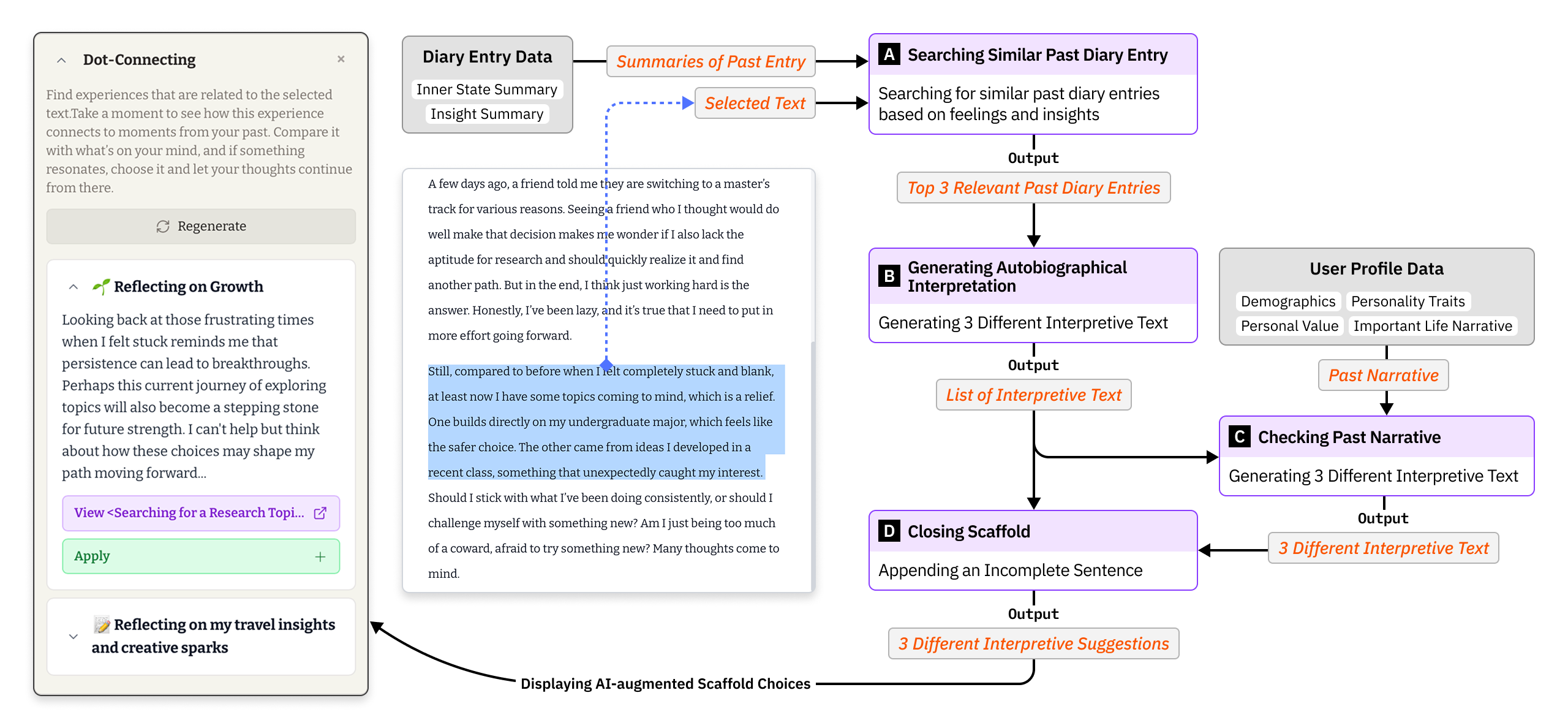

Dot-Connecting retrieves summaries of past journal entries relevant to the currently selected passage and generates interpretations that connect the current experience to prior experiences. When there are not enough relevant prior records, the system refers to representative past experiences from the user profile to attempt broader contextual connections. In this way, it helps users understand present events and emotions within a longer autobiographical narrative.

Overview of the Dot-Connecting generative pipeline.

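A possible shape for Dot-Connecting retrieval, including the fallback to profile experiences, is sketched below. The source does not say how relevance is computed; this sketch assumes a precomputed embedding vector for each past-entry summary, cosine similarity, and hypothetical cutoffs (`k`, `minScore`, `minHits`). The names `retrieveContext`, `PastEntry`, and `RetrievedContext` are likewise illustrative.

```typescript
// A past entry summary with a precomputed embedding (assumed, not from the source).
interface PastEntry {
  date: string;
  summary: string;
  embedding: number[];
}

interface RetrievedContext {
  source: "entries" | "profile";
  items: string[];
}

// Cosine similarity over the shared dimensions of two vectors.
function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  const n = Math.min(a.length, b.length);
  for (let i = 0; i < n; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Returns the top-k past summaries scoring at least minScore; if fewer than
// minHits qualify, falls back to representative experiences from the profile.
function retrieveContext(
  queryEmbedding: number[],
  entries: PastEntry[],
  profileExperiences: string[],
  k = 3,
  minScore = 0.5,
  minHits = 1,
): RetrievedContext {
  const ranked = entries
    .map((entry) => ({ entry, score: cosine(queryEmbedding, entry.embedding) }))
    .filter((r) => r.score >= minScore)
    .sort((x, y) => y.score - x.score)
    .slice(0, k);
  if (ranked.length >= minHits) {
    return { source: "entries", items: ranked.map((r) => `${r.entry.date}: ${r.entry.summary}`) };
  }
  return { source: "profile", items: profileExperiences.slice(0, k) };
}
```

As the Discussion notes, similarity alone does not guarantee personal significance, so a ranking like this is best read as a baseline.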

In both features, the outputs are not presented as completed conclusions. Instead, they are offered as short first-person interpretive suggestions that users can continue writing from. This reflects the system’s core stance: AI does not resolve meaning on the user’s behalf, but supports the user in developing interpretations through their own language.

Implementation

Augmentiary was implemented as a web-based platform.

- The front end was built using Next.js, React, Tailwind CSS, and the TipTap editor.

- The back end used Supabase to manage user data and journal records.

- The generative pipeline called the OpenAI Chat Completions API with the GPT-4o-mini model to generate candidate interpretations for the user-selected passage.
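For illustration, a request to the Chat Completions endpoint with `gpt-4o-mini` can be made with the runtime's built-in `fetch`, as sketched below. The source does not say whether Augmentiary used the official SDK or raw HTTP, or whether it requested JSON output; `buildChatRequest` and `generate` are hypothetical names. The call is meant to run server-side (for example, in a Next.js route handler) so the API key never reaches the browser.

```typescript
interface ChatCompletionMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface ChatRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Builds a POST request to OpenAI's Chat Completions endpoint using gpt-4o-mini.
// JSON mode is an assumption here; it requires the prompt itself to ask for JSON.
function buildChatRequest(messages: ChatCompletionMessage[], apiKey: string): ChatRequest {
  return {
    url: "https://api.openai.com/v1/chat/completions",
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({
        model: "gpt-4o-mini",
        messages,
        response_format: { type: "json_object" },
      }),
    },
  };
}

// Sends the request and returns the text of the first choice (empty if none).
async function generate(messages: ChatCompletionMessage[], apiKey: string): Promise<string> {
  const { url, init } = buildChatRequest(messages, apiKey);
  const res = await fetch(url, init);
  if (!res.ok) {
    throw new Error(`Chat Completions request failed with status ${res.status}`);
  }
  const data = (await res.json()) as {
    choices: { message: { content: string | null } }[];
  };
  return data.choices[0]?.message.content ?? "";
}
```

Separating request construction from sending keeps the request shape easy to inspect without making a network call.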

3-4 Four-Week Field Deployment Study and Key Findings

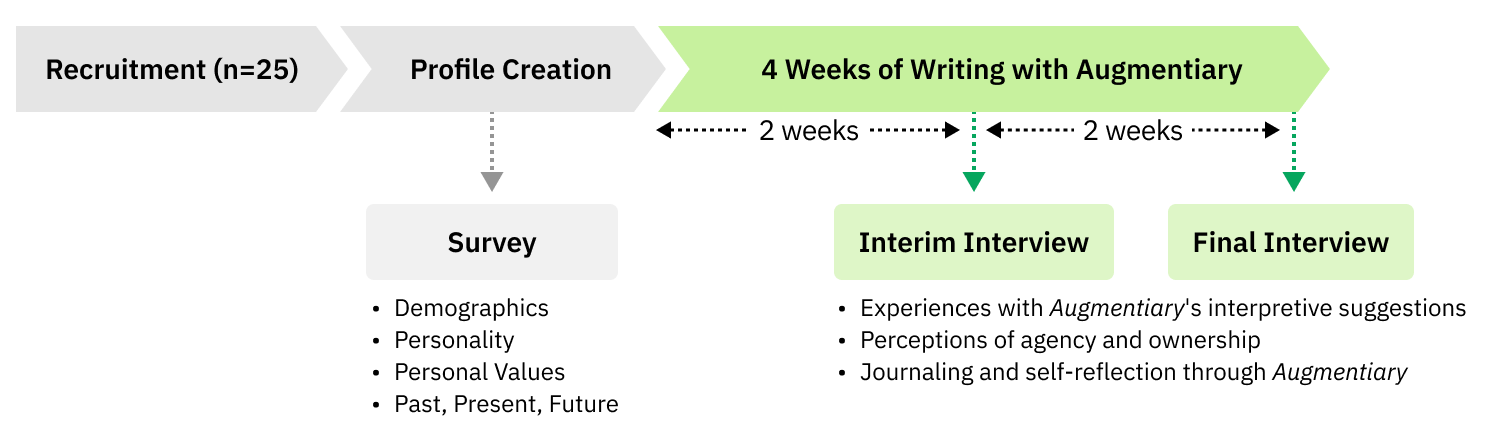

The deployment study was conducted over four weeks in everyday life rather than in a lab, based on the premise that journaling is fundamentally a personal practice embedded in daily routines. The study involved 25 participants with an average age of 25.32, and 23 of them reported being familiar or very familiar with LLMs. Participants were recruited from people with intrinsic motivation for journaling and self-reflection. Before using the system, they provided demographic information, personality traits, personal values, and important life narratives, and then used the system in their everyday settings. Interim interviews and final interviews were conducted at the end of weeks 2 and 4, respectively.

Overview of the field deployment study using Augmentiary.


Findings

Finding 1: AI interpretations were experienced not as answers, but as reference points and objects of productive comparison.

- Participants did not treat AI suggestions as fixed conclusions. Instead, they treated them as reference points to compare against their own thoughts. Even when they did not directly incorporate a suggestion into their writing, they often used it as raw material for reflection. When interpretations were presented as concrete sentences, they helped participants step back from their immediate narrative, notice blind spots they could not see while emotionally immersed, and revisit their experiences from a different angle.

- Positive reframing was experienced ambivalently. Many participants felt that the AI’s positive language helped interrupt cycles of negative thinking and move them toward a more balanced perspective. At the same time, some resisted suggestions that felt overly optimistic or premature relative to the weight of the situation.

- Suggestions that did not resonate sometimes played a productive role. One of the most interesting findings was that deeper reflection emerged not only when the AI offered a fitting interpretation, but also when it offered one that felt somewhat off. When participants encountered overly positive reframings or suggestions that felt misaligned with context, they often reacted against them. Yet this very act of resistance helped them clarify what they actually felt and what direction they wanted their thinking to take. In this sense, even imperfect suggestions sometimes helped participants articulate their real position more clearly.

Finding 2: Participants were able to maintain agency through selection, revision, and rejection, while also revealing tensions beneath that agency.

- Participants felt agency because they themselves first built the foundation of the text, decided when to invoke AI, and chose which suggestions to use. Rather than simply following AI output, they often took only useful fragments, rewrote them, and added their own context. In this process, AI suggestions were treated not as authoritative answers, but as workable material.

- The fading highlight and incomplete sentence form encouraged active engagement. Participants said the highlight gave them an intuitive sense of how much of the text still reflected AI’s contribution, while the ellipsis at the end of each suggestion acted as a gentle prompt to continue writing. Some participants even described the fading highlight itself as motivating them to keep writing and make the text more their own.

- Over time, however, some participants also began to expect a more context-aware and proactive AI. Because the system responded only to passages users explicitly selected, and because users could choose only the suggestions they wanted, some participants worried that this selectivity could eventually narrow reflection by encouraging them to seek only the comfort or agreement they already wanted.

Finding 3: AI interpretations contributed to thought expansion and self-understanding.

- Most participants reported that AI suggestions helped unblock and extend their thinking. By encountering alternative perspectives, they were able to move toward interpretations they might not have reached on their own. This often helped them understand themselves, other people, and their situations in more layered ways. Some participants also felt that the AI helped them arrive more quickly at directions of thought they would eventually have reached anyway.

- Connecting past entries with present experience expanded self-understanding into a broader autobiographical narrative. Participants recalled earlier thoughts and feelings, recognized that what they had considered isolated incidents were part of recurring patterns, and in some cases understood experiences from different periods as connected expressions of a broader tendency or life orientation.

- For some participants, these reflections also led to more concrete attitude and behavior changes. Participants reported feeling that previously vague life directions had become clearer, that anxiety had been eased, or that reflection had translated into small actions such as making checklists, starting exercise routines, or managing schedules more deliberately. At the same time, the Dot-Connecting feature had limitations in the early stage of use, when there was not yet enough accumulated journaling history and the resulting connections could feel repetitive or weakly relevant.

4 Discussion

The findings of this study suggest a new UX paradigm for applying generative AI in domains that deal with personal identity and self-reflection.

Treating AI interpretations not as conclusions, but as negotiable reflective material

In this project, AI-generated interpretations were not treated as answers that settle meaning on behalf of the user. Instead, they were designed as negotiable reflective material that users could place beside their own writing, compare against, partially adopt, rewrite, or reject. In the field deployment study, participants indeed treated the suggestions in this way, using them less as conclusions than as points of comparison within an inner dialogue. When interpretations were clearly articulated in sentence form, they helped participants grasp otherwise vague thoughts more concretely and step back from emotionally immersive thinking.

What proved especially important was that usefulness did not arise only from “good” or well-fitting interpretations. Even when a suggestion felt awkward or unwelcome, the process of reacting against it often helped participants clarify what they did not agree with and what direction they wanted their thinking to take. When multiple candidate interpretations were presented together, participants were not simply choosing the right answer. They were negotiating meaning between alternatives. For this reason, the project suggests that AI interpretations are better left provisional and revisable than presented as authoritative conclusions.

At the same time, simply providing interpretive sentences was not enough. Interpretations became meaningful only when users could respond to them, rewrite them, and situate them within their own context. This is why Augmentiary combined multiple candidate suggestions, editable insertion into the original text, and visual cues that reveal the degree of user revision. The key was not to have AI write a good sentence, but to leave behind concrete material that users could use to carry on a dialogue with themselves.

Sustaining a balance between user agency and AI intervention

Another central insight of the project was that what matters in self-reflection is not merely reducing AI intervention, but carefully designing when and how initiative shifts between the user and the AI. Augmentiary was designed so that users first wrote in their own words, selected specific passages, and invoked AI only at moments they themselves chose. This ensured that reflection always began from the user’s own narration, and that the direction of interpretation also remained under the user’s control.

At the same time, emphasizing agency alone was not sufficient. If users repeatedly request interpretation only in familiar ways, the system may end up reinforcing existing perspectives rather than expanding them. In fact, some participants raised concerns that a system that responds only to user-selected passages could narrow perspective over time. For this reason, the project suggests that the goal should not be perfect alignment alone. Slight mismatch or unfamiliarity can also function as a resource for reflection. Participants sometimes became clearer about their own position precisely by pushing back against interpretations they did not like, which shows that misalignment is not necessarily a failure.

From this perspective, the project points toward a user-led structure as the default, while still leaving room for more active AI intervention when appropriate. For example, if the system detects that a user repeatedly selects only one type of perspective, or that reflection has become stagnant, it might suggest a less frequently chosen perspective and explain why it is surfacing that suggestion. The goal is not for AI to take over, but to help prevent reflection from hardening in only one direction while preserving the user’s sense of control.
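The detection rule proposed above could start as simple frequency counting over a user's recent Perspective-Expanding choices. This is a hypothetical sketch of a future direction, not a feature of the deployed system: the recent-history window, the 60% dominance threshold, the five-selection minimum, and the name `suggestUnderusedPerspective` are all assumptions.

```typescript
// A suggestion to try a less-used approach, with a user-facing explanation.
interface Nudge {
  approach: string;
  reason: string;
}

// Hypothetical rule: if one approach accounts for at least dominanceThreshold
// of the recent choices, surface the least-chosen approach and explain why.
function suggestUnderusedPerspective(
  recentChoices: string[],
  approaches: string[],
  dominanceThreshold = 0.6,
  minHistory = 5,
): Nudge | null {
  if (recentChoices.length < minHistory || approaches.length === 0) return null;
  const counts = new Map<string, number>(approaches.map((a): [string, number] => [a, 0]));
  for (const choice of recentChoices) {
    if (counts.has(choice)) counts.set(choice, (counts.get(choice) ?? 0) + 1);
  }
  let top = approaches[0];
  let least = approaches[0];
  for (const a of approaches) {
    if ((counts.get(a) ?? 0) > (counts.get(top) ?? 0)) top = a;
    if ((counts.get(a) ?? 0) < (counts.get(least) ?? 0)) least = a;
  }
  const share = (counts.get(top) ?? 0) / recentChoices.length;
  if (share < dominanceThreshold || least === top) return null;
  return {
    approach: least,
    reason: `Most recent selections (${Math.round(share * 100)}%) used "${top}"; "${least}" might offer a different angle.`,
  };
}
```

Returning a reason alongside the suggestion reflects the idea that the system should explain why it is surfacing a less familiar perspective, leaving the choice with the user.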

Treating connections across experiences not as simple similarity, but as personal significance

This project approached journaling not as a collection of isolated records, but as a process of connecting experiences across time to construct a personal narrative. Accordingly, the system was designed not only to reinterpret a selected passage from multiple angles, but also to relate present experience to past entries, values, and personal background. Participants often described this as helping previously disconnected experiences come together, and in some cases it led them to discover new understandings of themselves in moments they had previously considered trivial.

At the same time, such connections could not be supported simply by resurfacing entries with similar topics. When a retrieved past entry did not resonate with the current emotional state or concern, the resulting connection felt repetitive or superficial. This suggests that future reflection-support systems need to move beyond the question of what is similar and attend instead to why a given experience matters to this person now. Meaningful connections do not begin from linguistic similarity alone. They begin from personal significance, and only then can past records meaningfully extend present reflection.

Taken together, this project proposes not a way for AI to help people write journals more efficiently, but a structure that helps users interpret their experiences more deeply by catalyzing inner dialogue. Explicit yet provisional and incomplete interpretations, editable forms of intervention, interactions that can accommodate slight mismatch, and connection design grounded in personal significance all serve that larger direction. More broadly, the project suggests that future AI systems for reflection should be designed not around productivity or fluency, but around users’ interpretive agency and the depth of meaning-making.

5 Reflection

- The aesthetics of incompleteness and interaction with friction: One of the most important lessons in designing a service that incorporates capable AI was, paradoxically, deciding where to stop the AI from going further. Even though the LLM was capable of producing polished sentences instantly, I deliberately chose not to let it complete them. Instead, I designed the system so that users had to continue typing and gradually erase the highlight through their own edits. This showed me how a user’s sense of agency can be protected through incompleteness. At times, a small pause, revision, act of choosing, or moment of mismatch can produce a better experience than seamless automation. When working in intimate domains such as personal reflection, what requires the most careful design is not simple convenience, but the right degree of tension and openness that allows users to remain the subject of the process. This project led me to question the assumption that frictionless smoothness is always the ideal of UX, and to see designing thoughtful forms of tension as an important role for the designer.

- The importance of subtle interaction design: I also gained an important insight into how visual interaction design operates in AI systems. Elements such as the gradually fading highlight and the incomplete sentence form may appear technically small, but in practice they became central mechanisms for expressing authorship and agency. Through this experience, I came to see that interaction design in AI systems is not only about usability. It can also function as an ethical device that communicates whose voice is speaking and whose thinking remains at the center.

- The depth of insight made possible by qualitative research: I also learned a great deal from the research process itself. Quantitative indicators such as performance or click counts could not adequately explain which suggestions users accepted as their own, which they resisted, or why certain sentences felt comforting while others felt uncomfortable. One of the clearest examples was that users sometimes discovered their real feelings more strongly through an interpretation that felt slightly off than through one that offered perfect reassurance. These nuances of human-AI interaction in the wild are difficult to capture through quantitative data alone. The more subtle and intimate the relationship between humans and AI becomes, the more valuable in-depth qualitative research becomes as a way of understanding it.